AI infrastructure I built for myself - and use every day.

Multi-provider LLM orchestration, MCP tooling, and document intelligence on Cloudflare Workers.

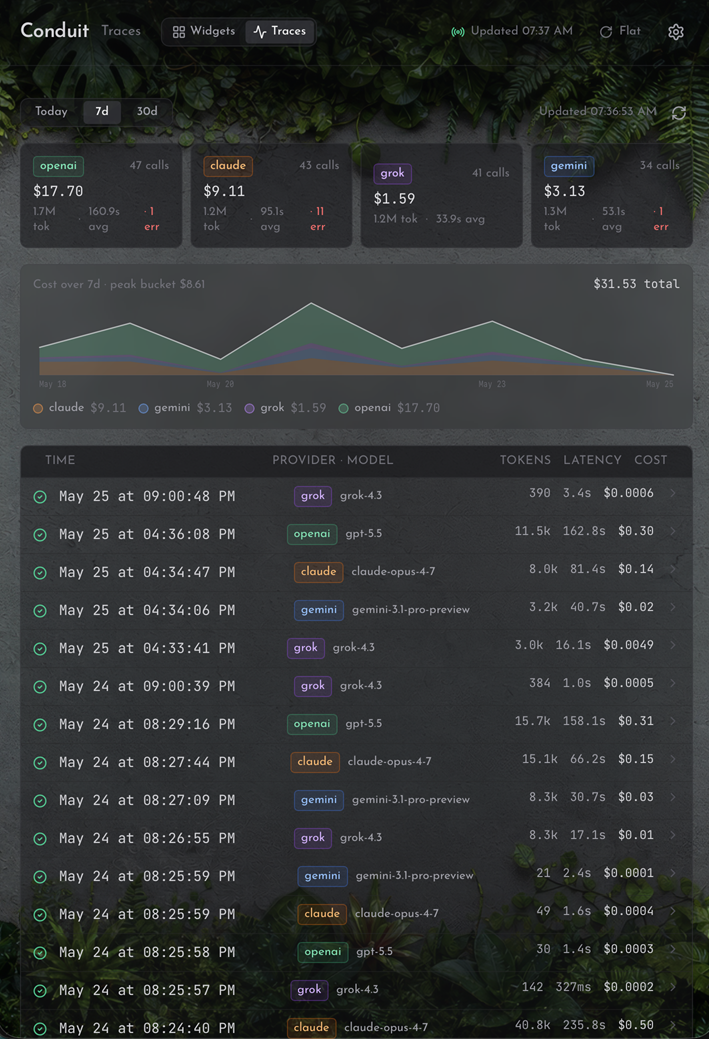

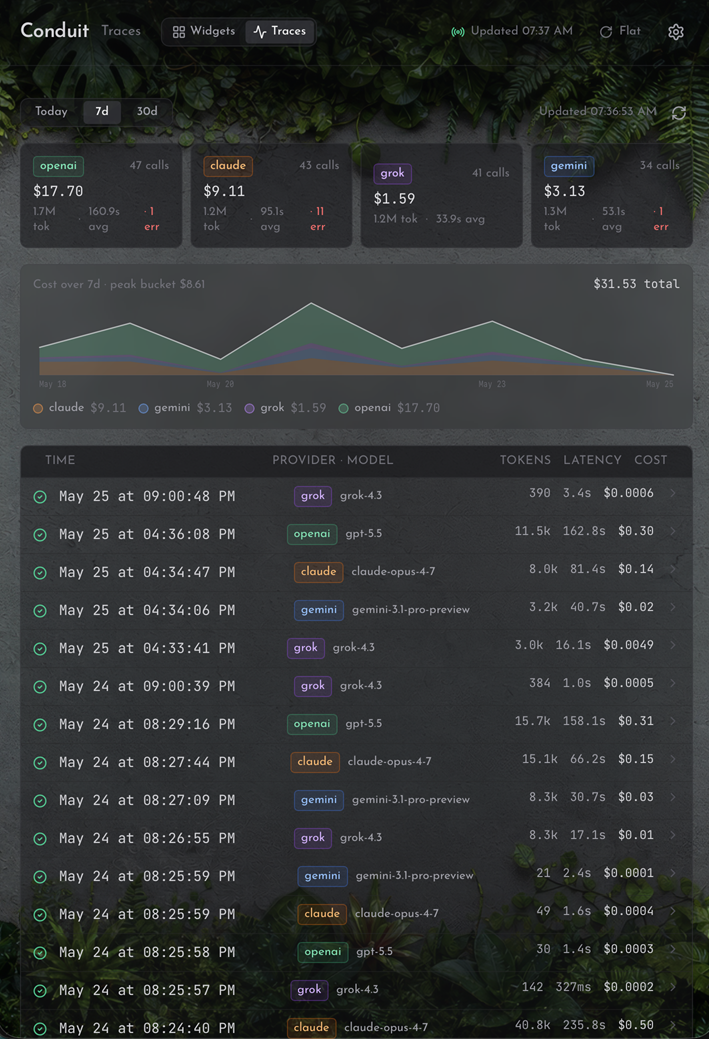

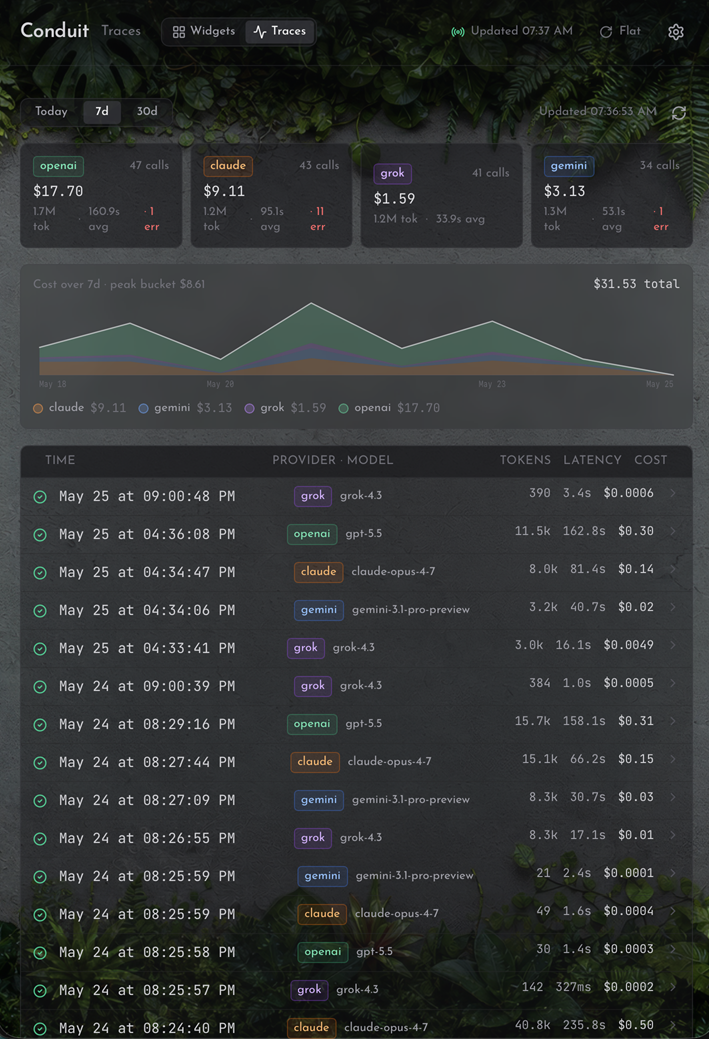

Pictured: the traces view - cost, tokens, and latency on every model call.

Everything routes through a single Cloudflare Worker backed by D1, KV, and R2. No containers. No uptime monitoring. No infrastructure to babysit.

Live surfaces sit behind Cloudflare Access. Happy to walk through a demo.

Route the same prompt to OpenAI, xAI, Gemini, and Claude with provider-specific adapters. Compare responses side by side, pick the best output, switch providers without touching application code. The arena at tools.condi.dev makes this interactive.
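The adapter idea can be sketched roughly as follows. This is a minimal illustration, not Conduit's actual code: `ProviderAdapter`, `stubAdapter`, and `fanOut` are hypothetical names, and the stub stands in for a real HTTP call to each provider.

```typescript
// Hypothetical shapes for illustration; none of these names come from Conduit.
interface LlmRequest { prompt: string; model: string; }
interface LlmResponse { provider: string; model: string; text: string; }
interface LlmFailure { provider: string; error: string; }

interface ProviderAdapter {
  readonly name: string;
  complete(req: LlmRequest): Promise<LlmResponse>;
}

// Stub adapter standing in for a real call to a provider API.
function stubAdapter(name: string, reply: (prompt: string) => string): ProviderAdapter {
  return {
    name,
    async complete(req: LlmRequest): Promise<LlmResponse> {
      return { provider: name, model: req.model, text: reply(req.prompt) };
    },
  };
}

// Send one prompt to every adapter and collect the results side by side.
// A failing provider becomes an error entry instead of failing the whole comparison.
async function fanOut(
  adapters: ProviderAdapter[],
  prompt: string,
  model: string,
): Promise<Array<LlmResponse | LlmFailure>> {
  return Promise.all(
    adapters.map((a) =>
      a.complete({ prompt, model }).catch(
        (e: unknown): LlmFailure => ({ provider: a.name, error: String(e) }),
      ),
    ),
  );
}
```

Because application code only sees `ProviderAdapter`, swapping or adding a provider under this sketch means registering another adapter rather than changing callers.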

Every `send_to_llm` call is traced: cost, tokens, and latency, stamped per provider and model. A live spend chart and a per-call ledger turn "what is this costing me" into a number, not a guess. It is the same observability layer the 2026 AI-gateway products converged on.
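A per-call trace of this kind might look like the sketch below. The field names, the `traced` wrapper, and the per-million-token price shape are assumptions made for illustration; the page does not describe how Conduit stores traces or prices.

```typescript
// Hypothetical record shapes; prices are USD per million tokens and must be supplied by the caller.
interface Usage { inputTokens: number; outputTokens: number; }
interface Price { inputPerMTok: number; outputPerMTok: number; }
interface ModelResult { text: string; usage: Usage; }
interface Trace {
  provider: string;
  model: string;
  usage: Usage;
  latencyMs: number;
  costUsd: number;
}

// Cost of one call from its token counts and the model's price.
function costUsd(usage: Usage, price: Price): number {
  return (usage.inputTokens * price.inputPerMTok + usage.outputTokens * price.outputPerMTok) / 1e6;
}

// Wrap a model call, time it, and hand a trace record to a sink (a ledger table, a log, ...).
async function traced(
  provider: string,
  model: string,
  price: Price,
  call: () => Promise<ModelResult>,
  sink: (t: Trace) => void,
  now: () => number = Date.now,
): Promise<ModelResult> {
  const started = now();
  const result = await call();
  sink({
    provider,
    model,
    usage: result.usage,
    latencyMs: now() - started,
    costUsd: costUsd(result.usage, price),
  });
  return result;
}
```

Summing `costUsd` over the recorded traces, grouped by provider and model, is what would feed a spend chart in this sketch.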

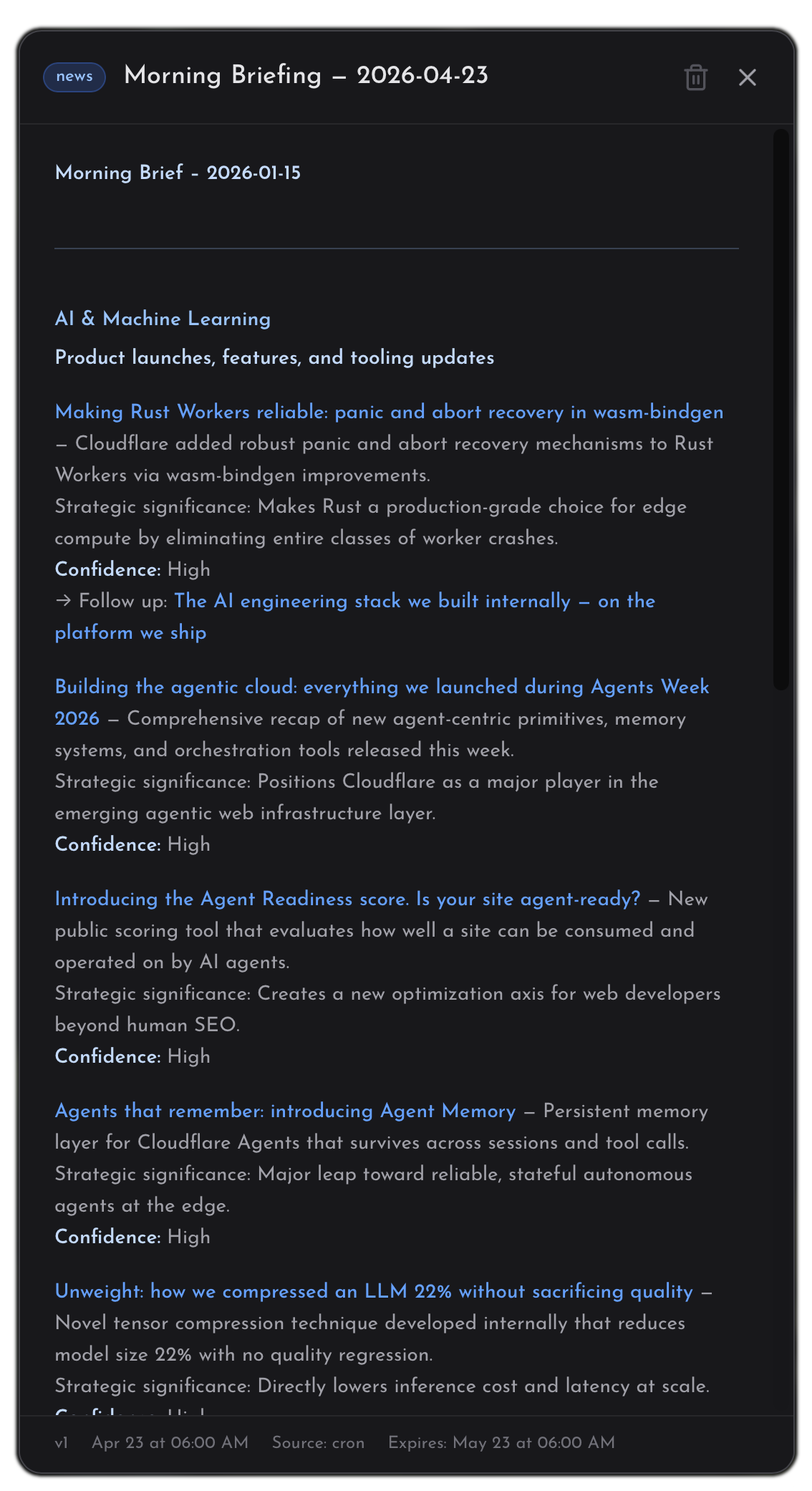

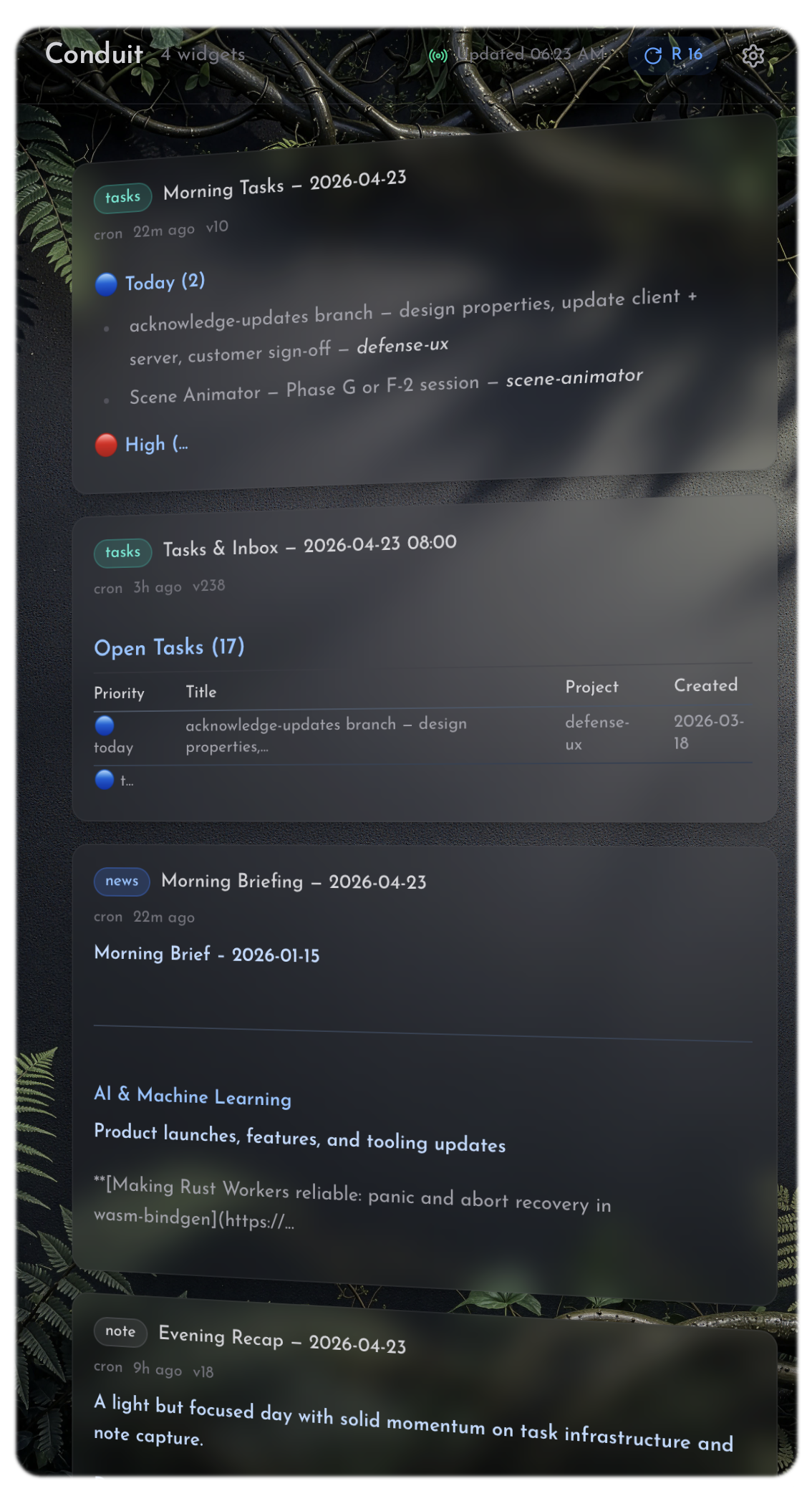

A slot-based, cron-driven information dashboard. Morning news briefings, task summaries, evening recaps - all generated by scheduled AI jobs and published to named widget slots. ETag-cached polling keeps it snappy; a time-travel archive scrolls back through previous versions.
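ETag-cached polling can be sketched as a small decision function: the server fingerprints the current slot content, and a client that already holds that fingerprint gets an empty 304 instead of the full body. The names here (`fnv1a`, `respondToPoll`) and the choice of hash are assumptions for illustration, not the dashboard's actual implementation.

```typescript
// FNV-1a 32-bit hash over UTF-16 code units: a cheap fingerprint, not a cryptographic one.
function fnv1a(text: string): string {
  let h = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    h ^= text.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return ("0000000" + (h >>> 0).toString(16)).slice(-8);
}

interface PollResult { status: 200 | 304; etag: string; body: string | null; }

// ifNoneMatch is the value of the client's If-None-Match header, or null on a first poll.
function respondToPoll(slotContent: string, ifNoneMatch: string | null): PollResult {
  const etag = '"' + fnv1a(slotContent) + '"';
  if (ifNoneMatch === etag) {
    return { status: 304, etag, body: null }; // unchanged: skip the payload
  }
  return { status: 200, etag, body: slotContent };
}
```

A polling client keeps the last `etag` it saw and sends it back on the next request, so an unchanged widget slot costs a header exchange rather than a full payload.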

Around two dozen tools exposed via the Model Context Protocol (MCP) - context ingestion, task tracking, project management, full-text search, cross-model workflows, email, dashboard publishing. Connected to Claude Code and Claude.ai as a live integration, not a demo.

Content goes in messy - meeting notes, research dumps, raw captures. Conduit tags and distills it into LLM-ready context, chunks it for RAG retrieval, and stores relations as a first-class graph so references don't get lost. Retrieval is FTS5 full-text search across everything, not keyword matching against filenames.
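The page does not say how Conduit chunks content, so the following is only one common approach, shown as a sketch: fixed-size character windows with a small overlap, so text that straddles a boundary still appears whole in at least one chunk. `chunkText` and its parameters are hypothetical.

```typescript
// Split text into windows of `size` characters, each sharing `overlap` characters with the previous one.
function chunkText(text: string, size: number, overlap: number): string[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new RangeError("need size > 0 and 0 <= overlap < size");
  }
  const chunks: string[] = [];
  const step = size - overlap;
  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + size));
    if (start + size >= text.length) break; // the last window reached the end of the text
  }
  return chunks;
}
```

For example, `chunkText("abcdefghij", 4, 1)` yields `["abcd", "defg", "ghij"]`. Real pipelines often split on sentence or heading boundaries instead; the overlap idea carries over.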

Conduit Fabric handles task management, context capture, document intelligence, and multi-provider LLM routing across every working session. The dashboard runs cron jobs at 6am, noon, and 9pm - morning briefing, task triage, evening recap.
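The three-times-a-day schedule could be dispatched along these lines. Cloudflare cron triggers fire in UTC, so the expressions below assume the intended 6am, noon, and 9pm are UTC times; the job names and `jobForCron` are hypothetical.

```typescript
type DashboardJob = "morning-briefing" | "task-triage" | "evening-recap";

// Map the cron expression that fired to the job it should run.
// Expressions are standard five-field cron: minute hour day-of-month month day-of-week.
function jobForCron(cron: string): DashboardJob | null {
  switch (cron) {
    case "0 6 * * *":
      return "morning-briefing";
    case "0 12 * * *":
      return "task-triage";
    case "0 21 * * *":
      return "evening-recap";
    default:
      return null; // unknown trigger: do nothing rather than guess
  }
}
```

In a Worker, the `scheduled` handler receives the expression that fired as `controller.cron`, so a handler under this sketch would call `jobForCron(controller.cron)` and publish the result to the matching widget slot.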

The MCP server is connected to Claude Code and Claude.ai right now. Work flows through Conduit.

20+ years building defense software, simulation systems, and training platforms. Currently exploring what happens when one engineer treats AI tooling as infrastructure instead of a novelty.

Before Conduit, my AI setup was a pile of disjointed MCP servers - hard to update, capabilities scattered across terminals, apps, and browsers, nothing speaking to anything else. Conduit started as the cleanup. It turned into the system I work through every day: one place to capture context, track tasks, route prompts across providers, and publish back to a dashboard I actually read.

Want to talk AI-augmented workflows, MCP tooling, or Cloudflare architecture?

conduit@condi.dev